SSUlt report – 5th entry

This is why I am still debugging. I will give you a broad overview of the software architecture. Well, I write "debugging", but I am actually rewriting most of it. And I may have a shot at testing the second version before the end of the mission…

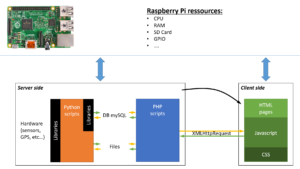

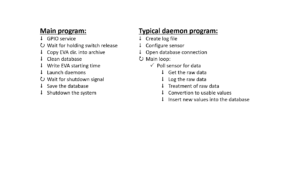

As I have stated, the interface is Web-based. The server and the client both run locally on the same device, which is… the Raspberry Pi. Also on the server side, Python scripts act as the interface between the sensor measurements and the database.

The Web interface is hard to summarize because it has to display a lot of different information. To give an idea of the amount of work involved, the HTML and JavaScript code totals over 6000 lines… And there are still more pages to write (the interface is not complete yet).

The PHP scripts are the interface between the database and the requests from the client. This is the smallest part of the work. Still, that is over 1000 lines of code to select, insert, modify and sort data from the database (and very occasionally from files).

The complexity comes with the Python scripts (more than 4000 lines). Because some of them communicate with sensors, they cannot run in a synchronous loop. For instance, because resources can be busy with something else, a GPS position update can take a few seconds to arrive. In the meantime, nothing else would get done except waiting. With just one device, that can be okay. With the dozen I have, waiting for each one to reply would be very inefficient, especially since all of them have a delay between the measurement request and the reception of the data.
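To make the cost of the synchronous loop concrete, here is a minimal sketch. The device names, the delays and the `read_sensor` function are invented for illustration (the real scripts talk to actual hardware); the point is only that one pass takes at least the sum of all the reply delays.

```python
import time

# Hypothetical reply delays in seconds (scaled down; real ones can be seconds).
DELAYS = {"gps": 0.05, "imu": 0.02, "co2": 0.03}

def read_sensor(name):
    """Stand-in for a blocking read: sleeps for the device's reply delay."""
    time.sleep(DELAYS[name])
    return name, 42  # dummy measurement

def poll_once():
    """Read every device in turn; each read blocks the whole loop."""
    start = time.monotonic()
    readings = [read_sensor(name) for name in DELAYS]
    return readings, time.monotonic() - start

readings, elapsed = poll_once()
print(f"{len(readings)} readings in {elapsed:.2f} s")
```

With a dozen devices, the time for one pass grows with every device added to the loop, and nothing else runs while it waits.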

Instead, I use daemon scripts to do the job outside the main program. The main program starts these daemons at the beginning. After that, each daemon is autonomous and responsible for communicating with its device(s) over its communication protocol (UART, I2C, SPI). When it receives data, it processes the data before putting the numbers into the database and into log files. Processed data goes into the database to be used by the interface, while raw data (which can be hex numbers) goes into log files, along with any errors.
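The daemon layout described above can be sketched roughly as follows. This is an illustration under assumptions, not the actual code: it uses Python threads (the real daemons may well be separate scripts or processes), two plain lists stand in for the database and the log files, and the raw frame `"0x1A"` is a dummy value.

```python
import threading
import time

database = []   # stand-in for the real database (converted values)
raw_log = []    # stand-in for the log files (raw values)
lock = threading.Lock()

def daemon_loop(bus, delay, stop):
    """Hypothetical daemon: wait for a device on `bus`, convert the data, store it."""
    while not stop.is_set():
        time.sleep(delay)            # simulated wait for the device reply
        raw = "0x1A"                 # dummy raw frame
        value = int(raw, 16)         # convert the hex string to a number
        with lock:                   # every daemon writes to the shared stores itself
            raw_log.append((bus, raw))
            database.append((bus, value))

stop = threading.Event()
daemons = [
    threading.Thread(target=daemon_loop, args=(bus, delay, stop), daemon=True)
    for bus, delay in [("UART", 0.03), ("I2C", 0.01), ("SPI", 0.02)]
]
for d in daemons:
    d.start()          # the main program starts the daemons once...
time.sleep(0.15)       # ...then carries on with its own work
stop.set()
for d in daemons:
    d.join()
print(sorted({bus for bus, _ in database}))
```

Note that every daemon takes the same lock to write, so slow writes from one daemon delay all the others.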

This is the bottleneck! If, for instance, the UART and I2C daemons have to write data into the database at the same time, this creates an undesired lag. The same goes for writing into files. Since the client also uses resources, sharing the Raspberry Pi's limited resources seems to be the cause of the interface's impractical lags.

In addition, if a daemon crashes, it is not restarted. The rest of the EVA then loses all the data that daemon was supposed to collect.
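One common way to deal with this kind of failure is to let the main program check on its daemons and restart any that have died. The sketch below is only an assumption about how that could look, using threads and a simulated failure; nothing here is taken from the actual software.

```python
import threading
import time

errors = []                                   # stand-in for the error log
state = {"starts": 0, "measurements": 0}

def daemon_loop(stop):
    """Hypothetical daemon that fails on its first start, then works normally."""
    state["starts"] += 1
    while not stop.is_set():
        time.sleep(0.01)
        if state["starts"] == 1:
            errors.append("simulated sensor failure")
            return                            # the thread dies, like a crashed daemon
        state["measurements"] += 1

def start(stop):
    worker = threading.Thread(target=daemon_loop, args=(stop,), daemon=True)
    worker.start()
    return worker

stop = threading.Event()
restarts = 0
worker = start(stop)
for _ in range(10):                           # main loop ticks
    time.sleep(0.02)
    if not worker.is_alive():                 # dead daemon detected: start a new one
        worker = start(stop)
        restarts += 1
stop.set()
worker.join()
print(f"restarts: {restarts}, errors logged: {len(errors)}")
```

Without the `is_alive()` check, the data collection would simply stop after the first failure.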

When I started this project, I was focusing on developing every part of it and not on optimization (because I cannot optimize something I have not mastered yet). Now I know where to make modifications.

My next version will focus on making sure that the daemons write neither into the database nor into files. Instead, the data will be sent back to the main program, which will handle the database interface and write into the log files periodically (not at each loop, as is the case in the first version). Also, the client will be moved off the Raspberry Pi. The Raspberry Pi will be turned into a WiFi router so that any personal mobile device (smartphone or tablet) will be able to display the interface by connecting to it. The backpacks are going to be upgraded to WiFi hotspots! In the process of recoding most of the software, some modifications to the database will require modifications to the PHP/HTML/JS code as well. In total, over 11000 lines of code will be reviewed/rewritten in the coming weeks.
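The planned data flow (daemons only measure, the main program does all the writing, in periodic batches) could look something like the sketch below. It is a guess at the shape of the design, not the real code: threads and a `queue.Queue` are assumptions, the table name `measurements` is invented, and an in-memory SQLite database stands in for whatever database the project actually uses.

```python
import queue
import sqlite3
import threading
import time

def daemon_loop(bus, delay, out, stop):
    """Hypothetical daemon: it only measures and hands results to the main program."""
    while not stop.is_set():
        time.sleep(delay)                  # simulated device reply delay
        out.put((bus, time.time(), 26))    # dummy converted value

def drain(out):
    """Collect everything currently waiting in the queue without blocking."""
    batch = []
    while True:
        try:
            batch.append(out.get_nowait())
        except queue.Empty:
            return batch

db = sqlite3.connect(":memory:")           # SQLite is an assumption, used as a stand-in
db.execute("CREATE TABLE measurements (bus TEXT, ts REAL, value REAL)")

out, stop = queue.Queue(), threading.Event()
daemons = [threading.Thread(target=daemon_loop, args=(b, d, out, stop), daemon=True)
           for b, d in [("UART", 0.03), ("I2C", 0.01), ("SPI", 0.02)]]
for d in daemons:
    d.start()

flushes = 0
for _ in range(3):                         # main loop: one batched write per period
    time.sleep(0.1)
    batch = drain(out)
    with db:                               # a single transaction per flush
        db.executemany("INSERT INTO measurements VALUES (?, ?, ?)", batch)
    flushes += 1

stop.set()
for d in daemons:
    d.join()
rows = db.execute("SELECT COUNT(*) FROM measurements").fetchone()[0]
print(f"{rows} rows written in {flushes} flushes")
```

Only the main program touches the database here, so the daemons never compete for it, and many measurements share one write.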

That’s okay. I have 460 hours to do that before the end of the mission… Yes, counting sleeping time! As a friend of mine would say: the urgent is done, the extremely urgent is in the pipeline, for miracles expect a delay…